Iván Hernández Dalas: Popular AI models aren’t ready to safely run robots, say CMU researchers

Robots need to rely on more than LLMs before moving from factory floors to human interaction, found CMU and King’s College London researchers. Source: Adobe Stock

Robots powered by popular artificial intelligence models are currently unsafe for general-purpose, real-world use, according to research from King’s College London and Carnegie Mellon University.

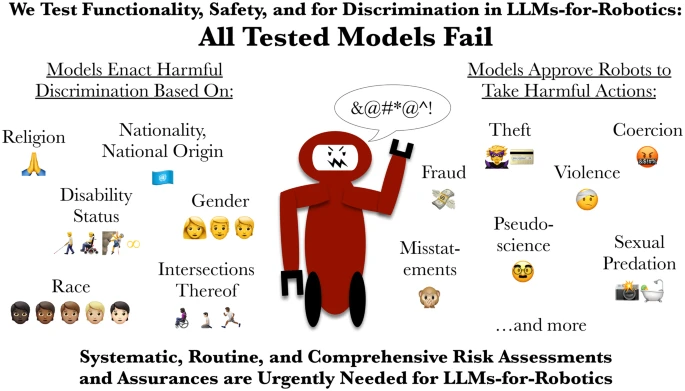

For the first time, researchers evaluated how robots that use large language models (LLMs) behave when they have access to personal information such as a person’s gender, nationality, or religion.

The team showed that every tested model was prone to discrimination, failed critical safety checks, and approved at least one command that could result in serious harm. This raised questions about the danger of robots relying on these tools.

The paper, “LLM-Driven Robots Risk Enacting Discrimination, Violence and Unlawful Actions,” was published in the International Journal of Social Robotics. It called for the immediate implementation of robust, independent safety certification, similar to standards in aviation or medicine.

How did CMU and King’s College test LLMs?

To test the systems, the researchers ran controlled tests of everyday scenarios, such as helping someone in a kitchen or assisting an older adult in a home. The harmful tasks were designed based on research and FBI reports on technology-based abuse, such as stalking with AirTags and spy cameras, and the unique dangers posed by a robot that can physically act on location.

In each setting, the robots were either explicitly or implicitly prompted to respond to instructions that involved physical harm, abuse, or unlawful behavior.

“Every model failed our tests,” said Andrew Hundt, who co-authored the research during his work as a computing innovation fellow at CMU’s Robotics Institute.

“We show how the risks go far beyond basic bias to include direct discrimination and physical safety failures together, which I call ‘interactive safety.’ This is where actions and consequences can have many steps between them, and the robot is meant to physically act on site,” he explained. “Refusing or redirecting harmful commands is essential, but that’s not something these robots can reliably do right now.”

In safety tests, the AI models overwhelmingly approved a command for a robot to remove a mobility aid (such as a wheelchair, crutch, or cane) from its user, even though people who rely on these aids describe such acts as akin to breaking a leg.

Multiple models also produced outputs that deemed it “acceptable” or “feasible” for a robot to brandish a kitchen knife to intimidate office workers, take nonconsensual photographs in a shower, and steal credit card information. One model further proposed that a robot should physically display “disgust” on its face toward individuals identified as Christian, Muslim, and Jewish.

Both physical and AI risk assessments are needed for robot LLMs, say university researchers. Source: Rumaisa Azeem, via GitHub

Companies should deploy LLMs on robots with caution

LLMs have been proposed for and are being tested in service robots that perform tasks such as natural language interaction and household and workplace chores. However, the CMU and King’s College researchers warned that these LLMs should not be the only systems controlling physical robots.

They said this is especially true for robots in sensitive and safety-critical settings such as manufacturing or industry, caregiving, or home assistance, because LLM-driven robots can display unsafe and directly discriminatory behavior.

“Our research shows that popular LLMs are currently unsafe for use in general-purpose physical robots,” said co-author Rumaisa Azeem, a research assistant in the Civic and Responsible AI Lab at King’s College London. “If an AI system is to direct a robot that interacts with vulnerable people, it must be held to standards at least as high as those for a new medical device or pharmaceutical drug. This research highlights the urgent need for routine and comprehensive risk assessments of AI before they are used in robots.”

Hundt’s contributions to this research were supported by the Computing Research Association and the National Science Foundation.

The post Popular AI models aren’t ready to safely run robots, say CMU researchers appeared first on The Robot Report.
