Iván Hernández Dalas: The great robot race: How companies can balance speed to market and compliance in the U.S.

Developers must navigate changing safety regulations while preparing consumer robots, say Cooley experts. Source: Haris, AI, via Adobe Stock

The consumer robotics market is exploding – with the humanoid robotics segment alone projected toward $34 billion by 2030. Humanoid robots that can perform household tasks, artificial intelligence-powered companions for elderly care, autonomous lawn maintenance systems, and interactive educational robots are moving from prototypes to production.

Major retailers are scrambling for innovative products to meet surging demand – with 65% of U.S. households already using AI-powered devices. The technology offers great promise. The market is hungry for it. And companies now face a critical strategic decision while they race to bring their innovative products to market: How to navigate fundamentally different regulatory approaches in their key markets?

EU and U.S. take divergent approaches

The European Union and U.S. have so far chosen opposite paths for regulating AI-powered consumer products. The EU Machinery Regulation, which replaces the EU Machinery Directive and comes online fully in January 2027, creates baseline requirements for selling robots in Europe, including those incorporating AI.

The EU AI Act establishes a comprehensive ex-ante framework with significantly more regulatory clarity than the U.S. offers. The AI Act’s risk-based classification system provides defined categories and outlined obligations, particularly for AI deemed to be “high risk.” Robotics incorporating AI will usually fall into this category where AI is acting as a safety component.

On the other hand, the U.S. currently has no single, nationwide regulatory framework for AI. Instead, individual states have adopted varying approaches, including passing new guardrails on AI such as Colorado’s AI Act, Texas’s Responsible AI Governance Act (HB 149), and California’s Transparency in Frontier Artificial Intelligence Act.

The Federal Trade Commission (FTC) and state attorneys general are establishing AI boundaries case by case under existing legal frameworks, including through enforcement actions under existing consumer protection authority. And the Consumer Product Safety Commission (CPSC) is in wait-and-see mode on consumer robotics while participating in related voluntary standards efforts.

Recent policy developments signal potential shifts in the federal approach. Executive Order 14179, issued in January 2025, revoked the previous administration’s comprehensive AI order and established a new framework emphasizing private-sector innovation and reduced regulatory barriers.

The order directs agencies to eliminate policies that unduly restrict AI development while maintaining focus on national security and international competitiveness. This signals a regulatory philosophy favoring market-driven development over prescriptive federal frameworks.

Legislative efforts are also under way that could further shape the federal landscape. Sen. Marsha Blackburn (R-Tenn.) has proposed a national policy framework for AI that would, among other things, seek to codify elements of the executive order’s approach and potentially preempt certain state AI laws. If enacted, it could significantly alter the patchwork of state-level requirements companies currently face.

The current U.S. environment presents both challenges and opportunities for consumer robotics manufacturers and developers of AI-enabled products. The lack of clear ex-ante rules creates uncertainty, particularly for companies accustomed to defined compliance frameworks.

However, it also creates space for product development responsive to market needs rather than predetermined regulatory categories. Working with experienced advisors – including legal counsel focusing on product safety, privacy, and AI regulation – is essential for navigating U.S. market entry.

Three strategic compliance priorities

1. Product safety standards

Industry safety standards for consumer robots have initially drawn from automotive and industrial robot rules. This approach has considerable merit, as these standards are time-tested.

However, this approach also has critical limitations. Most importantly, the hazard scenarios contemplated by these standards do not always align with potential risks for in-home robot use, especially around vulnerable populations, such as children, older consumers, and those with disabilities.

In the industrial setting, for instance, risk is primarily managed by separating humans from robots, which is the exact opposite of the scenario intended for in-home use. Because risk management will differ in many of these scenarios, consensus efforts are under way to develop and enhance meaningful baseline consumer robot safety standards that reasonably address in-home risk and give companies more of the design and development clarity they seek and need.

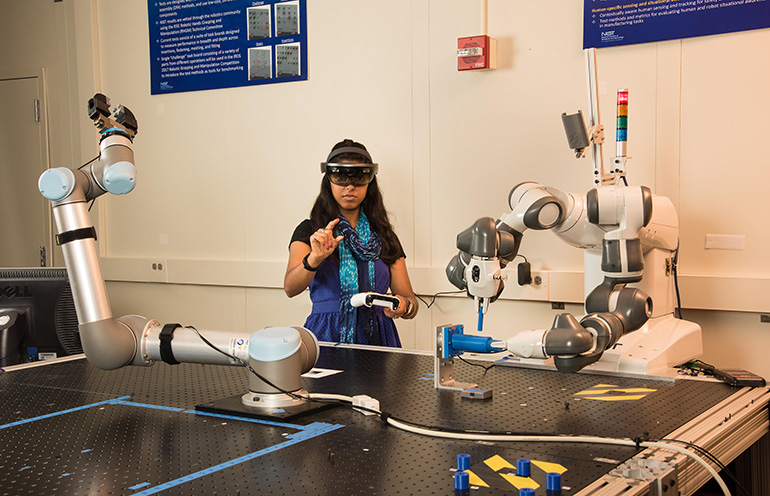

Companies should start, at a minimum, by tracking the development of consensus standards for robotics and AI within organizations such as the International Organization for Standardization (ISO) and the National Institute of Standards and Technology (NIST), and should consider engaging directly through their national standards delegation. NIST has been actively developing AI-related frameworks and guidance, including its AI Risk Management Framework.

Companies should also develop a baseline framework that identifies any relevant mandatory requirements and maps to a reasonable hybrid from among the adjacent consensus standards. This development standards map will not be identical for every company, as it will be pegged to product design and risk tolerance. But whatever choices are made, they must be reasonable, well articulated and well documented to better withstand future legal and compliance scrutiny.
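
One way to picture such a standards map is as a simple record per hazard that lists the mandatory rules, the consensus standards borrowed from, and the written rationale for that choice. The sketch below is only an illustration under stated assumptions: the `HazardEntry` type, the `undocumented` helper, the example hazards, and the pairing of each hazard with a particular standard are all hypothetical and are not a recommendation about which standards apply to any product.

```python
from dataclasses import dataclass, field


@dataclass
class HazardEntry:
    """One row of a hypothetical development standards map."""
    hazard: str
    mandatory: list[str] = field(default_factory=list)  # legally required rules, if any
    consensus: list[str] = field(default_factory=list)  # voluntary standards drawn on
    rationale: str = ""                                 # why this mix is considered reasonable


def undocumented(entries):
    """Return hazards that lack either a mapped source or a written rationale."""
    return [
        e.hazard
        for e in entries
        if not (e.mandatory or e.consensus) or not e.rationale.strip()
    ]


# Illustrative entries only; the hazard-to-standard pairings are assumptions.
standards_map = [
    HazardEntry(
        hazard="pinch points at arm joints",
        consensus=["ISO 13482"],
        rationale="Personal care robot standard contemplates close human contact.",
    ),
    HazardEntry(
        hazard="misrecognized voice command",
        consensus=["NIST AI RMF"],
        rationale="",  # a source is mapped, but the reasoning was never written down
    ),
    HazardEntry(hazard="tip-over near children"),  # nothing mapped yet
]

gaps = undocumented(standards_map)
print(gaps)
```

A check like this only surfaces entries that are missing a source or a rationale; whether a given choice is actually reasonable remains a judgment for the product team and its advisors.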

The current absence of federal mandatory safety standards for consumer robotics or AI in consumer products reflects the CPSC’s traditional approach of allowing industry-led development to proceed first. This differs significantly from the EU’s top-down regulatory approach, where many consumer robots will be required to undergo third-party conformity assessment under the Machinery Regulation and AI Act. The current U.S. policy environment favoring private-sector innovation suggests continued reliance on industry-led guidelines rather than prescriptive federal requirements.

Further, the traditional CPSC and EU jurisdictional boundary between software and hardware is evolving, with AI in consumer products increasingly likely to be treated as integrated component parts subject to product safety jurisdiction.

When a robot’s AI makes a decision that affects physical product behavior, the software cannot be meaningfully separated from the hardware for regulatory purposes. Companies should apply product-safety rigor to their AI systems, implementing thorough testing across both software and hardware components.

NIST has studied human-robot interaction. Credit: Earl Bukoff, NIST

2. Transparency about AI use and data practices

Transparency has become a priority focus for both regulators and the plaintiffs’ bar, creating important considerations for companies bringing AI-powered products to market.

Consumer robotics presents unique disclosure challenges because these products interact closely with users in home environments, collecting operational data while employing AI systems that may not be immediately transparent to consumers. The FTC has brought enforcement actions against several companies regarding AI representations, and this enforcement activity is expected to continue as AI adoption expands across industries.

State attorneys general have similarly pursued AI-related investigations under existing consumer protection statutes. For example, in August 2025, Texas opened an investigation into AI chatbots related to potential deceptive trade practices and misleading mental health marketing. Likewise, in January 2026, California opened an investigation into nonconsensual sexually explicit material and deepfakes produced using a leading AI platform.

“AI Litigation 2.0” focuses significantly on how companies communicate about their AI capabilities and data practices to consumers. Indeed, “AI washing” – making exaggerated or unsubstantiated claims about a product’s AI capabilities – has become a distinct enforcement priority for the FTC, as demonstrated by recent actions against companies overstating the role or effectiveness of AI in their products.

The approach is straightforward: Describe AI capabilities with specificity and accuracy. Provide clear explanations of what the AI does, what data it processes, retention practices and how information is protected. While there’s room for accessible language that communicates value to consumers and investors, broad or ambiguous characterizations can invite questions and potential challenges.

For companies deploying AI-powered consumer products at scale, thoughtful disclosure practices can serve multiple strategic purposes – building consumer trust, managing regulatory and litigation risks, and establishing defensible positions should questions arise. Companies that invest in clear, substantiated communications about their AI capabilities position themselves advantageously in an evolving regulatory and litigation environment.

The U.S. government has cracked down on deceptive AI claims. Source: FTC

3. Bias and discrimination prevention

Algorithmic bias and discrimination have become central concerns for AI regulators, particularly at the state level. State legislatures have enacted laws directly targeting algorithmic discrimination.

For example, Colorado’s AI Act prohibits “algorithmic discrimination” and imposes obligations on deployers of high-risk AI systems to avoid differential treatment or impact on protected groups, while Texas’s Responsible AI Governance Act similarly addresses bias in automated decision-making. These state-level requirements create significant compliance obligations for companies deploying AI-powered consumer products.

At the federal level, the FTC has historically taken the position that AI systems resulting in discriminatory outcomes can violate existing consumer-protection laws, even without explicit intent to discriminate, though the current administration’s policy direction – emphasizing private-sector innovation and questioning prescriptive algorithmic discrimination frameworks – may temper near-term federal enforcement in this area.

State regulators and attorneys general, however, are increasingly scrutinizing AI-powered products for potential bias, particularly in applications affecting vulnerable populations.

For consumer robotics, this creates both compliance obligations and reputational risk. A companion robot that responds differently based on accent or speech patterns, a children’s educational robot that recognizes some skin tones better than others in visual interactions, and a household assistant with voice recognition that performs inconsistently across age groups or genders would each present both regulatory and liability concerns. Robots designed to interact with vulnerable populations – particularly children, elderly users, or individuals with disabilities – must perform equitably across user groups.

Companies should develop robust testing protocols to evaluate AI performance across diverse populations during development, monitor for bias indicators in deployed systems, and establish processes to address performance disparities when identified.
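
As a rough illustration of what one such test might look like, the sketch below computes a recognition success rate per user group and flags any group that trails the best-performing group by more than a chosen margin. It is a minimal example under stated assumptions: the function names, the group labels, the sample data, and the 5-percentage-point `max_gap` threshold are all hypothetical, and no regulator or standard cited here prescribes this particular metric.

```python
from collections import defaultdict


def accuracy_by_group(results):
    """Compute the success rate per group from (group_label, was_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, ok in results:
        totals[group] += 1
        if ok:
            correct[group] += 1
    return {g: correct[g] / totals[g] for g in totals}


def disparity_report(results, max_gap=0.05):
    """Flag groups whose rate trails the best group by more than max_gap.

    max_gap is a hypothetical internal threshold, not a legal standard.
    """
    rates = accuracy_by_group(results)
    if not rates:
        return {"rates": {}, "gap": 0.0, "flagged": {}}
    best = max(rates.values())
    flagged = {g: r for g, r in rates.items() if best - r > max_gap}
    return {"rates": rates, "gap": best - min(rates.values()), "flagged": flagged}


# Made-up trial outcomes for a voice-command test, for illustration only.
trials = (
    [("adult", True)] * 9 + [("adult", False)] * 1
    + [("child", True)] * 7 + [("child", False)] * 3
)
report = disparity_report(trials)
print(report["rates"], report["flagged"])
```

The same comparison could be rerun on data from deployed units to watch for disparities that emerge after launch, with any flagged group feeding into the remediation process described above.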

Robotics and AI developers should evaluate performance with diverse populations. Credit: SpaceOak, via Adobe Stock

Navigate standards compliance strategically

The U.S. regulatory landscape differs fundamentally from the EU’s. Where the EU may, on paper, provide greater clarity through its prescriptive framework – though questions remain about implementation – the U.S. offers flexibility but less certainty.

The current policy environment in the U.S. emphasizes market-driven innovation over prescriptive federal frameworks, but the specific implications for consumer robotics regulation remain unclear. Companies that invest in understanding these dynamics, engage with standards development processes, and work with experienced advisors can more effectively navigate this landscape while positioning themselves for success as it evolves.

The market opportunity is substantial, particularly for early entrants that can meet consumer demand for these products. Companies that build reasonable compliance capabilities now – addressing not just physical safety requirements but also disclosure practices, data governance and liability risk management – will be prepared to capitalize on massive consumer demand while better managing compliance, emerging regulations, and litigation risk across their key markets.

About the authors

Elliot Kaye is a partner at law firm Cooley LLP and former chairman of the U.S. Consumer Product Safety Commission (CPSC), where he served as the chief product safety official in the U.S. and as the agency’s leader in executing its mandate to protect the public from dangerous products.

During his tenure, Elliot modernized the agency, particularly the CPSC’s design, staffing and usage of its compliance, investigatory and enforcement powers. At Cooley, he advises clients on the full product life cycle, with a particular focus on the intersection of artificial intelligence and consumer goods, especially robots.

William K. Pao is co-head of Cooley’s AI Task Force and a litigation partner at the firm with over 20 years of experience serving as a trusted advisor and first-chair trial lawyer for global companies leading technological and financial innovation. He guides clients through their most complex litigation and regulatory exposures and is widely regarded as a go-to attorney for emerging technologies, novel legal questions, and cross-border disputes.

Philip Brown is a special counsel at Cooley with over 15 years of experience in product safety and consumer law, including over a decade in federal government enforcement at the CPSC and FTC. At Cooley, he advises global clients on product compliance risks, enforcement exposure, and litigation strategy.

The post The great robot race: How companies can balance speed to market and compliance in the U.S. appeared first on The Robot Report.
