Iván Hernández Dalas: Opentrons introduces dynamic simulation, visualization for AI-generated lab workflows

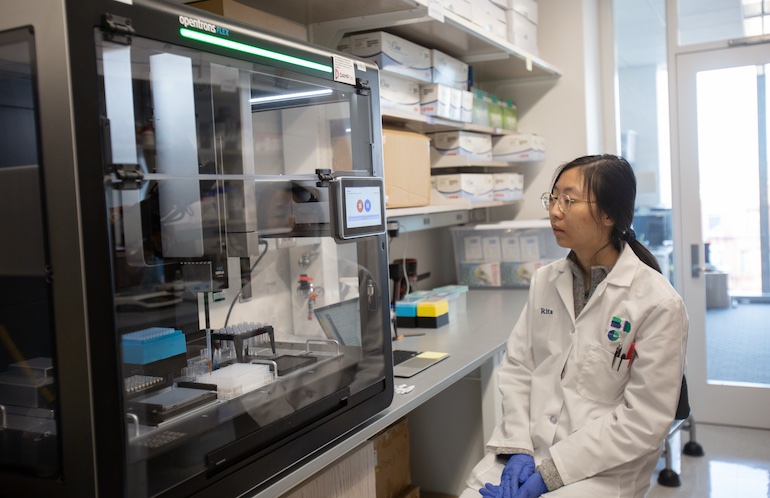

A researcher at Boston University’s DAMP Lab works with an Opentrons Flex robot. Credit: Opentrons Labworks Inc.

Pharmaceutical companies and research institutions are using artificial intelligence to design robotic experiments at scale, but they need to know if AI-generated instructions will execute correctly before handling valuable samples and reagents. Opentrons Labworks Inc. today announced Protocol Visualization for Opentrons Flex, a new simulation and visualization capability.

The feature allows scientists to simulate and inspect robotic protocols in a dynamic virtual environment before running them on the Flex system. The interface enables users to observe each step of an automated workflow.

“This capability gives researchers a dynamic way to simulate and inspect robotic execution before an experiment begins, creating a clearer bridge between computational design and physical laboratory workflows,” stated James Atwood, CEO of Opentrons. “As AI systems propose more experiments, researchers need infrastructure that makes those experiments understandable, inspectable, and repeatable before they reach the bench.”

Founded in 2013, Opentrons said it has more than 10,000 robotic systems deployed globally, including installations at leading research universities and many of the world’s largest biopharma companies.

Visualization tool runs on existing protocols

Opentrons said the feature supports protocols authored across its software ecosystem, including OpentronsAI, the Python Protocol API, and the Protocol Designer application. Scientists can inspect workflows and observe changes in liquid levels at microliter scale.

The system also includes a Slot Spotlight view that provides additional detail for individual deck locations. This allows users to monitor well volumes and module conditions throughout a run, the New York-based company explained.

For laboratories developing complex automation workflows, this level of inspection may support faster debugging and protocol refinement. Scientists can review workflows offline without interrupting active laboratory operations, noted Opentrons.

The new capability will be available through Opentrons App Version 9.0, scheduled for release in April 2026.

Opentrons CEO explains how lab feature works

Atwood replied to the following questions from The Robot Report:

Is Opentrons’ simulation and inspection layer hardware-agnostic? If not, is there a specific set of procedures it covers?

Atwood: The simulation and visualization environment is designed specifically for protocols written for the Opentrons Flex robotic platform. The system takes any valid Flex protocol and allows the user to simulate and inspect how the robot will execute it. That includes everything from simple liquid transfers to complex workflows with thousands of robotic actions.

Scientists can step through protocols of virtually any size, from a handful of steps to workflows containing 10,000 or more actions, and observe pipetting, liquid handling, labware movements, and module states before running the experiment. Because the simulation environment mirrors the Flex execution environment, it allows researchers to understand exactly how the robot will behave before committing reagents, consumables, and instrument time.
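To illustrate the general idea of checking a protocol before committing reagents, here is a minimal sketch in plain Python. It is not Opentrons' implementation and does not use the Opentrons API; the `Transfer` class, the `simulate` function, the well names, and the 360 µL capacity are all hypothetical. It replays a list of transfers against a table of well volumes and reports steps that would aspirate more liquid than a well holds.

```python
from dataclasses import dataclass


@dataclass
class Transfer:
    """One hypothetical liquid-handling action (not an Opentrons type)."""
    source: str
    dest: str
    volume_ul: float


def simulate(initial_ul, steps, capacity_ul=360.0):
    """Replay steps against a copy of the starting well volumes.

    Returns (history, problems): a snapshot of all well volumes after
    each step, and a list of human-readable problems that were found.
    """
    volumes = dict(initial_ul)
    history = []
    problems = []
    for index, step in enumerate(steps, start=1):
        available = volumes.get(step.source, 0.0)
        if step.volume_ul > available:
            problems.append(
                f"step {index}: {step.source} holds {available} uL, "
                f"cannot aspirate {step.volume_ul} uL"
            )
            moved = available  # move what is there so later steps stay inspectable
        else:
            moved = step.volume_ul
        volumes[step.source] = available - moved
        volumes[step.dest] = volumes.get(step.dest, 0.0) + moved
        if volumes[step.dest] > capacity_ul:
            problems.append(
                f"step {index}: {step.dest} exceeds {capacity_ul} uL capacity"
            )
        history.append(dict(volumes))
    return history, problems


# Assumed example data: well A1 starts with 250 uL.
steps = [
    Transfer("A1", "B1", 100.0),
    Transfer("A1", "B2", 100.0),
    Transfer("A1", "B3", 100.0),  # only 50 uL remains in A1 at this point
]
history, problems = simulate({"A1": 250.0}, steps)
for line in problems:
    print(line)
```

Each entry in `history` is a snapshot that can be inspected step by step, which loosely mirrors the kind of per-step review the interview describes. A real simulator would also model tips, modules, and labware movements.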

Why has the pharmaceutical industry been slow to address AI verification problems? How serious are they?

Atwood: Part of the challenge is that much of the expertise required to verify experiments has historically been tacit laboratory knowledge rather than formalized data. A lot of experimental troubleshooting relies on what experienced scientists notice at the bench: how a liquid behaves in a well plate, how a reaction looks as it proceeds, or whether something subtle seems off in the workflow. That kind of observational expertise is difficult to encode directly into AI systems.

In other words, the AI doesn’t know what it doesn’t know. Many of the verification challenges only become visible when experiments interact with the physical world. This is why the industry is now focusing on what we call physical AI: systems that combine language models with perception and real-world data.

Instead of relying only on documentation or protocols, these systems increasingly need visual data, sensor data, and execution data from real experiments. The verification challenge is serious, but it’s also solvable as automation platforms generate more structured experimental data and as AI models begin interacting directly with laboratory environments.

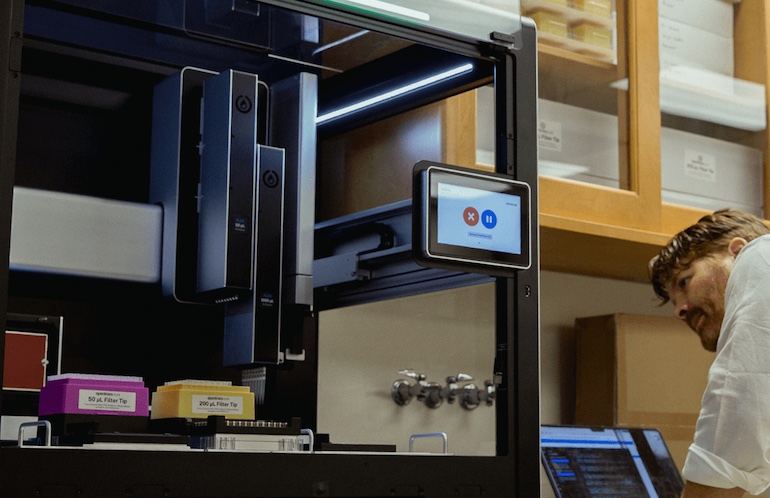

Protocol Visualization for Opentrons Flex is designed to test robotic biopharma experiments at scale before execution. Source: Opentrons

Can you describe how Opentrons’ generative AI works? How do you ensure repeatability and explainability?

Atwood: The generative AI behind OpentronsAI translates scientific intent into executable automation protocols.

At a high level, the system uses large language models combined with a retrieval-augmented generation (RAG) architecture. The models reference Opentrons’ documentation and a large internal knowledge base of laboratory protocols and automation workflows developed and verified by Opentrons over many years.

When a scientist describes an experiment in natural language, the system retrieves relevant examples and structured knowledge from this database and uses that context to generate a protocol suitable for execution on the robot.
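The retrieval step in a RAG pipeline can be sketched generically as follows. This is an illustrative toy, not the OpentronsAI architecture: the corpus entries, function names, and prompt wording are invented for the example, and a simple bag-of-words cosine similarity stands in for the learned embeddings a production system would likely use.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, corpus, k=2):
    """Return the k corpus entries most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        corpus,
        key=lambda doc: cosine(q, Counter(tokenize(doc["text"]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, examples):
    """Combine retrieved examples and the request into one prompt string."""
    context = "\n".join(f"- {doc['title']}: {doc['text']}" for doc in examples)
    return (
        f"Reference protocols:\n{context}\n\n"
        f"Scientist request: {query}\n"
        "Write a protocol."
    )


# Hypothetical knowledge base entries, invented for this sketch.
corpus = [
    {"title": "Serial dilution",
     "text": "Perform a serial dilution across the columns of a 96-well plate."},
    {"title": "PCR setup",
     "text": "Distribute master mix and add template DNA to PCR strips."},
    {"title": "Bead cleanup",
     "text": "Magnetic bead cleanup of DNA using a magnetic block module."},
]

query = "serial dilution across a 96-well plate"
prompt = build_prompt(query, retrieve(query, corpus, k=2))
print(prompt)
```

The resulting prompt would then be passed to a language model, which generates the protocol using the retrieved examples as context.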

Repeatability and explainability come from several layers of control. The protocols generated are fully inspectable Python-based automation workflows, meaning researchers can review, edit, and verify the steps before execution. Structured prompting also ensures that the input captures the information required to produce reliable automation protocols.

In practice, the AI helps scientists move faster from experimental idea to executable workflow, while still allowing human oversight and inspection before the experiment runs.

Did Opentrons work with specific lab automation vendors and end users to develop this offering, and if so, what did it learn?

Atwood: The AI capability was developed with extensive feedback from a cohort of beta users, including researchers building automated workflows on the Flex platform. One of the key insights was that as AI-generated protocols become more complex, researchers need better ways to inspect, understand, and debug workflows before execution.

In parallel, Opentrons is also collaborating with AI and robotics partners, including NVIDIA and HighRes Biosolutions, as part of broader efforts to connect AI systems with physical laboratory automation. These collaborations are helping push the ecosystem toward physical AI, where autonomous systems can reason about experiments, interact with robotic platforms, and adapt based on real-world feedback.

How is autonomous science evolving, and what challenges remain?

Atwood: The trajectory is toward increasingly autonomous laboratories, where AI systems can design experiments, execute them through robotics, observe outcomes, and refine future experiments based on the results.

Achieving that requires combining several capabilities:

- Reasoning systems, often powered by large language models, that can plan experiments

- Perception systems such as vision-language models that allow AI to observe what is happening in real experiments

- Physical AI systems that connect those models to laboratory automation platforms so experiments can be executed in the real world

Opentrons provides the infrastructure layer for this development, connecting AI-driven intent to reliable execution on automated lab hardware. The biggest challenge is building reliable feedback loops between digital intelligence and physical experiments. Unlike purely digital domains, biology requires capturing structured data from real laboratory environments: visual observations, instrument outputs, and environmental signals.

There has been rapid progress in this area, including advances in simulation and digital twin environments like NVIDIA Isaac, which help train and test AI systems before they interact with real laboratory hardware.

The post Opentrons introduces dynamic simulation, visualization for AI-generated lab workflows appeared first on The Robot Report.
